So how did this start? Actually, all of us (Solon, Sophie, and many other fellows and researchers, not just at PRG and the Information Law Institute but also at MCC) have been studying computation, automation, and control in different forms for quite a long time. But it was really only at the end of last summer that we realized there's this new notion of the algorithm gaining currency.


In the next ten years we will see data-driven technologies reconfigure systems in many different sectors, from autonomous vehicles and personalized learning to predictive policing and precision medicine. While these advances will create phenomenal new opportunities, they will also create new challenges and new worries, and it behooves us to start grappling with these issues now so that we can build healthy sociotechnical systems.

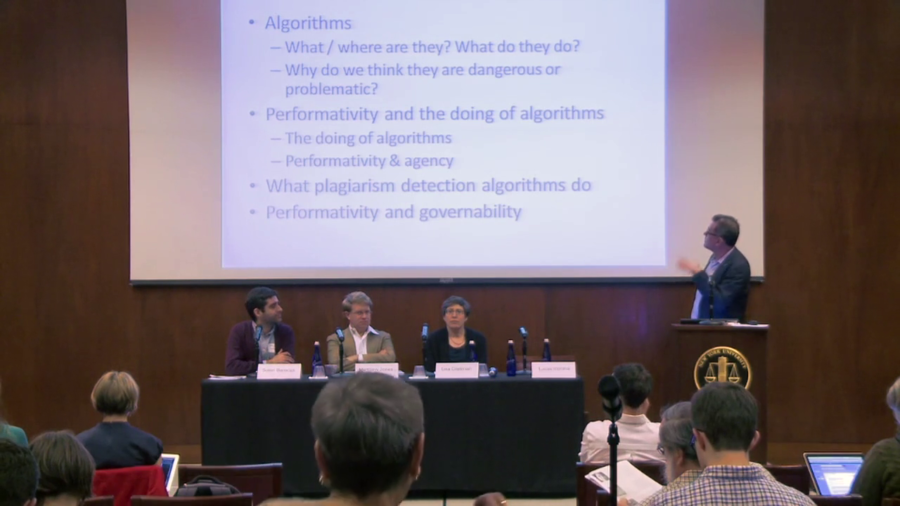

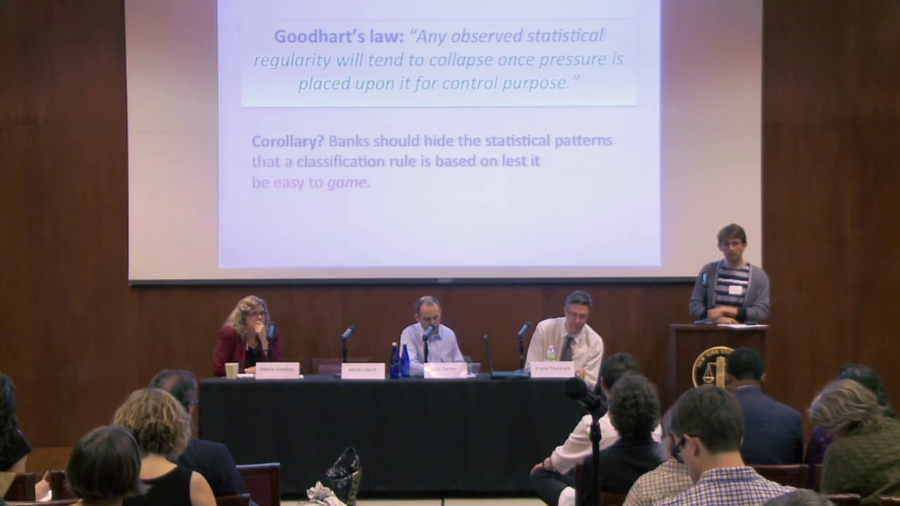

I just want to be clear that I'm not saying the details of the algorithms are irrelevant. In a way they can matter very much: in a certain circumstance, in a certain situated use, what the algorithm does might matter significantly, but we can't say that a priori. So we need to open up the algorithms and understand them as much as possible, but we must not be seduced into believing that because we understand them, we therefore know what they do.

We can't govern through knowledge, properly speaking. Even if many algorithms weren't trade secrets, Lucas and others have reminded us that nearly all would not be surveyable by human beings, even if we had access to their source code. We have to begin any such process from this fundamental lack of knowledge. We need to start from the same epistemological place that many of the producers of algorithms do.

Of all the different issues we face, three pose existential challenges to our species: nuclear war, ecological collapse, and technological disruption. We should focus on them.

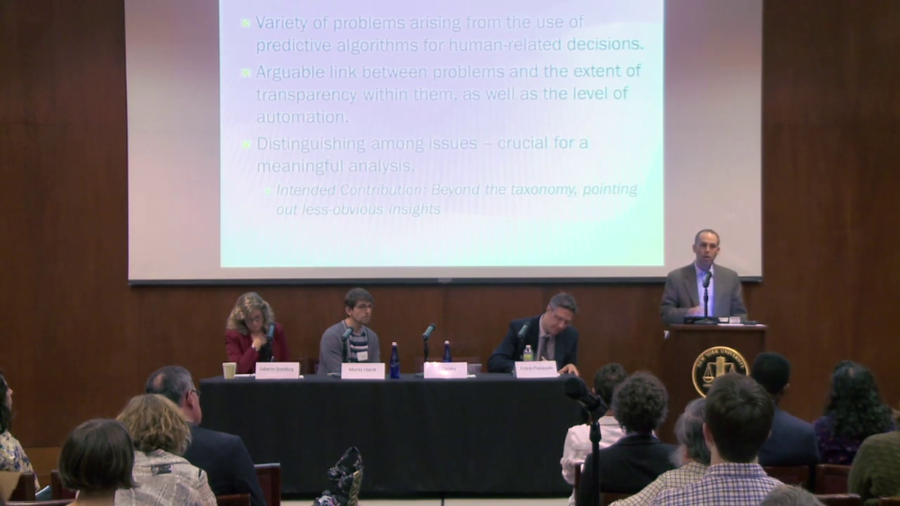

When you decide to opt for an automated process, you are, to some extent, already compromising transparency by doing so. Or you could put it the other way around: it's possible to argue that if you opt for extremely strict transparency regulation, you're making a compromise in terms of automation.

Rather than a discussion of what's been said so far, this is more a kind of research proposal: what I would like to see happening at the intersection of CS and this audience.

The study of search, whether by people like David Stark in sociology or by economists or others, I tend to see in the tradition of a really rich socio-theoretical literature on the sociology of knowledge. And as a lawyer, I tend to complement that by thinking that if there are problems, maybe we can look to the history of communications law.