Where I come from and where the rest of the world is, the issue’s not intelligence agencies having backdoor access to our data. Yes, they do have backdoor access to our data, but they also have front door access to our data.


I think he would to some extent be surprised that business has hijacked the Internet in a certain sense. That the entertainment industry (I'll just pick on them, but other industries too) has basically exploited that sort of delivery vehicle, which was not really made with them in mind, and has gained such a dominant position in dictating how and where the Internet goes.

One very interesting addition to the public space is how we are conditioning and defining the public space with regard to possible attacks. And it's changing the landscape radically. The very first knee-jerk reaction was concrete blocks in front of many institutions. Now they're trying to design these concrete blocks so they seem to be part of the landscape, but their presence and robustness are still so violent that it's hard to hide the intention.

Rather than begrudgingly pushing society forward to be ready, I ask designers to critically reflect on the limitations of their own design practices and to remember that to design for one intersection of society—namely, affluent middle-to-upper-class white American men—does not mean that those designs will work for those who do not identify as such. Even with modifications.

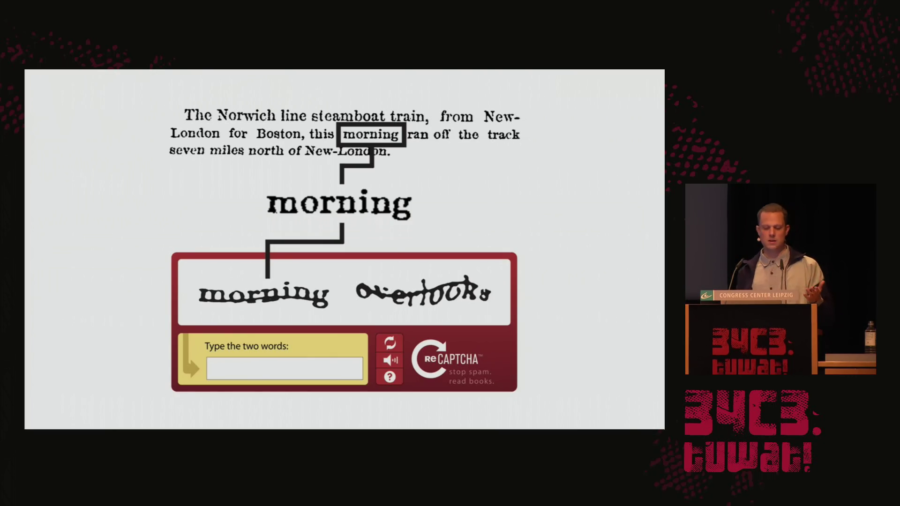

What is this condition? I would summarize it as people extending computational systems by offering their bodies, their senses, and their cognition. And specifically, bodies and minds that can be easily plugged in and later be easily discarded. So: bodies and minds that are algorithmically managed and under the permanent pressure of constant availability, efficiency, and perpetual self-optimization.

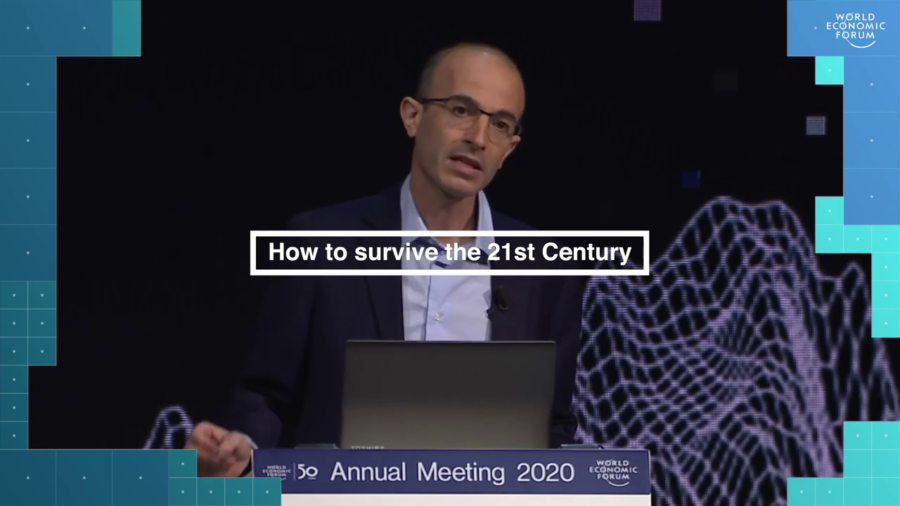

Of all the different issues we face, three problems pose existential challenges to our species. These three existential challenges are nuclear war, ecological collapse, and technological disruption. We should focus on them.

Citizenship, after a long stretch in which we didn't think about it much, feels like something we're all thinking about quite a lot these days. In the words of Hannah Arendt, citizenship is the right to have rights. All of your rights essentially descend from your citizenship, because only countries will protect those rights.

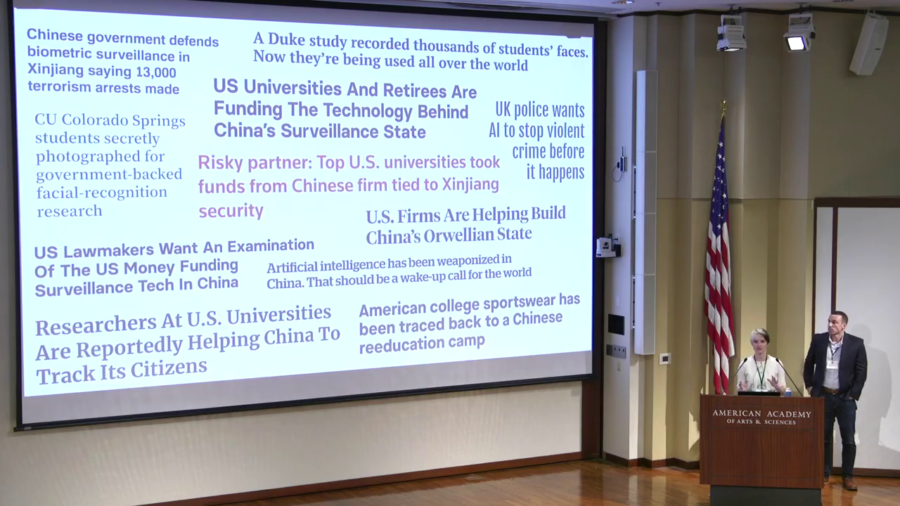

We wanted to look at how surveillance, and specifically these algorithmic decision-making systems and surveillance systems, feed into this kind of targeting decision-making. And in particular, what we're going to talk about today is the role of the AI research community: how that research ends up being used in the real world, with real-world consequences.