This is a moment to ask, as we make the planet digital, as we totally envelop ourselves in the computing environment that we’ve been building for the last hundred years: what kind of digital planet do we want? Because we are at a point where there is no turning back, and getting to ethical decisions, values decisions, decisions about democracy, is not something we have talked about enough, nor in a way that has had impact.

In a world of conflicting values, it’s going to be difficult to develop values for AI that are not the lowest common denominator.

[The] question of what happens when blackness enters the frame can kind of neatly encapsulate the ways I’ve been thinking and trying to talk about surveillance for the last few years.

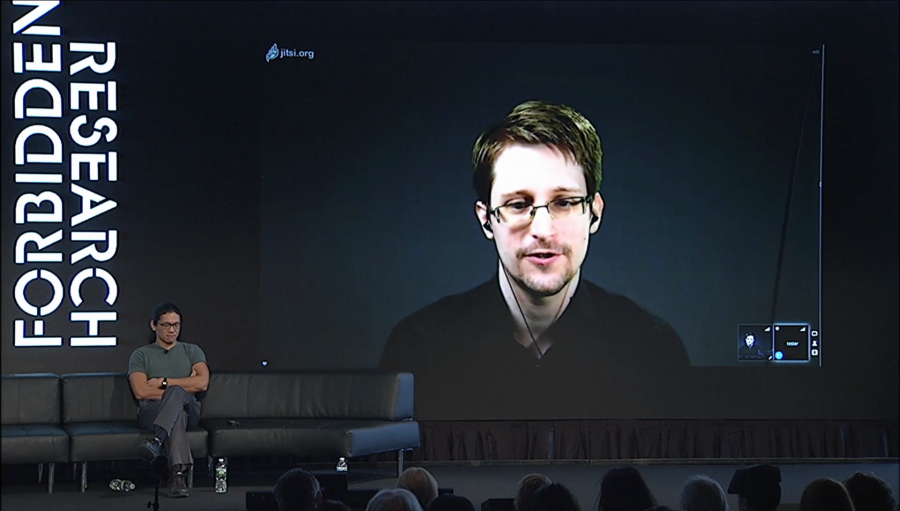

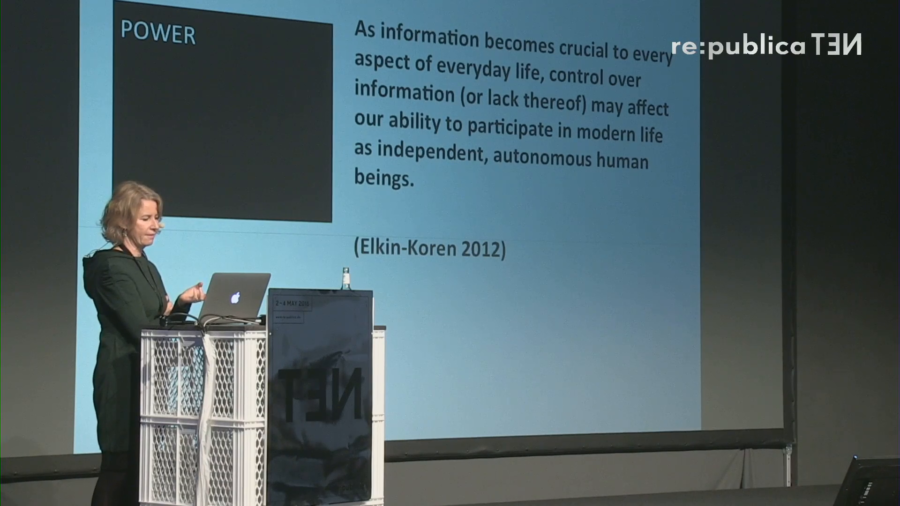

What does it mean for human rights protection that we have large corporate interests—the Googles, the Facebooks of our time—that control and govern a large part of the online infrastructure?

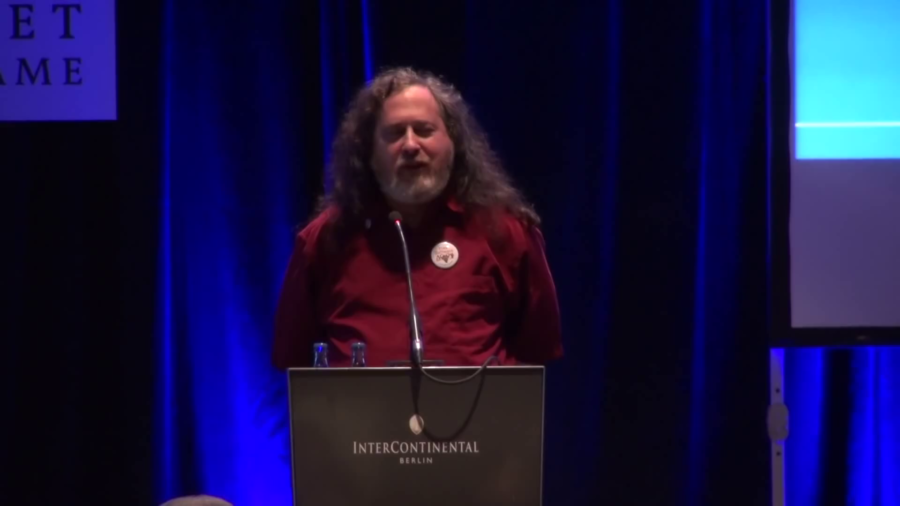

So, thirty years ago, if you wanted to get a new computer and use it, you had to surrender your freedom by installing a user-subjugating proprietary operating system. So I decided to fix that by developing another operating system and making it free, and it’s called GNU, but most of the time you’ll hear people erroneously calling it Linux.

Are there any limits to the connected workplace? Are there any concerns about the connected workplace? Is there any way in which you wouldn’t want either yourself or an employee to be connected? Are there any limits to the kinds of information we can gather in order to make our workforces more productive? In order to make our overall society more productive?