How people think about AI depends largely on how they know AI. And to the point, how most people know AI is through science fiction, which sort of raises the question, yeah? What stories are we telling ourselves about AI in science fiction?

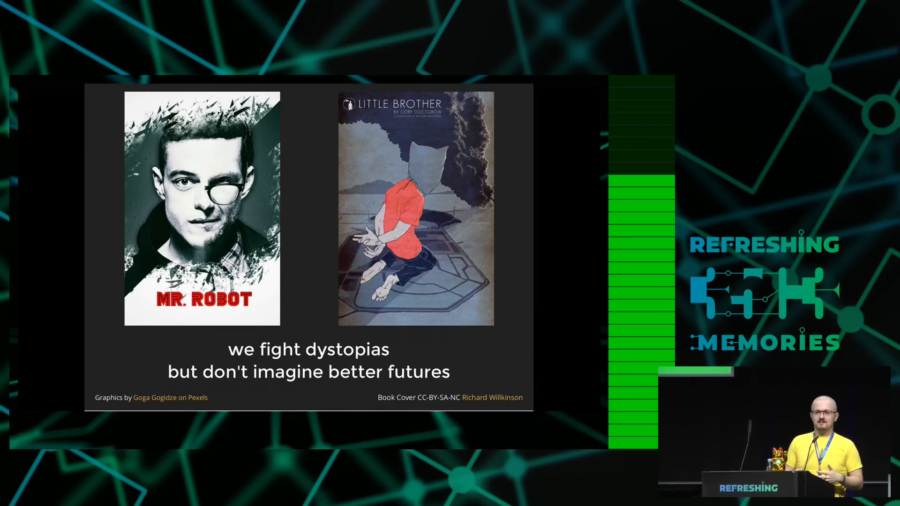

We have a lot of proposals on how technology should work in this society, how we want to avoid all the dangers we can see that others cannot see. But we do a very, very bad job at communicating it.

At the Center, one of our core goals, our mission statement, is to get people thinking more creatively and ambitiously about the future. What I mean when I talk about that is that we need to come up with better stories about the future. If you want to build a better world you have to imagine that world first.

Computers can tell stories, but they’re always stories that humans have input into a computer, which are then just being regurgitated. But they don’t make stories up on their own. They don’t really understand the stories that we tell. They’re not kind of aware of the cultural importance of stories. They can’t watch the same movies or read the same books we do. And this seems like this huge gap between what computers can do and what humans can do, if you think about how important storytelling is to the human condition.

In Shelley’s vision, Frankenstein was the modern Prometheus. The hip, up to date, learned, vital god who chose to create human life and paid the dire consequences. To Shelley, gods create and for humans to do that is bad. Bad for others but especially bad for one’s creator.

The future is on the whole a wonderful thing because it will bring us new things that we haven’t seen before. And that’s why we stick around.

Most companies […] don’t deliberately want to be malicious in the technology that they’re making. However, if you don’t think about the fact that your solution is not the only one, and is going to enter into a whole host of different things, then you are going to end up causing problems and it might as well be malice.

So the kind of technologies that get made are not necessarily very exciting. It’s something that [Alexis] Madrigal of The Atlantic said: these technologies that are coming out of these startups, they’re nice, they’re cheap, they’re fun. And they’re about as world-changing as another variation of beer pong. This is not big, radical change.