The very first line of any knitting pattern looks something like this: “Cast on 24 stitches.” That means to create the initial row of loops on the needle. Well, I looked at that, and my brain immediately recognized: well, that’s just a for loop.
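To make the analogy concrete, here is a minimal sketch in Python. The function name `cast_on` and the representation of the needle as a list of strings are hypothetical, chosen only for illustration: “Cast on 24 stitches” becomes a loop that runs 24 times and adds one loop to the needle on each pass.

```python
def cast_on(stitch_count):
    """Hypothetical helper: model 'Cast on N stitches' as a for loop
    that adds one loop (stitch) to the needle per iteration."""
    needle = []  # the needle starts empty
    for i in range(stitch_count):
        needle.append(f"loop {i + 1}")  # one new loop per iteration
    return needle


needle = cast_on(24)
print(len(needle))  # 24
```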

Archive

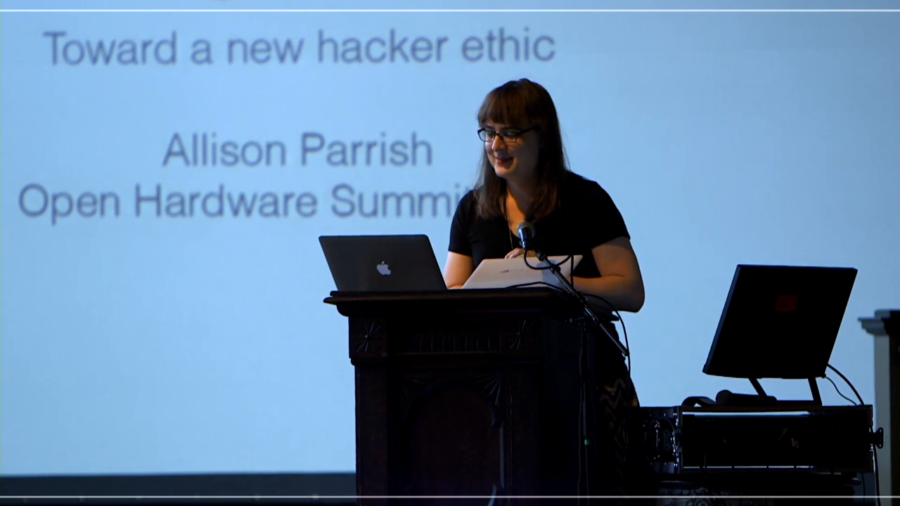

The culture gap at the center of the debate we’re having today is a culture gap between people who build hardware and people who build software. And those cultures have been diverging since the 1950s.

I’m going to teach you how to run your open source project in a fascist style. So friends, Ruby programmers, listen up. I discovered a revolution, a revolution in marketing open source. A revolution in social media marketing. A revolution in promotion better than guyliner. A revolution in you. It will change your life. It will change everyone’s life. The revolutionary technique is fascist propaganda.

I wouldn’t be surprised to find out that many of us here today like to see our work as a continuation of, say, the Tech Model Railroad Club or the Homebrew Computer Club, and certainly the terminology and the values of this conference, like open source, for example, have their roots in that era. As a consequence, it’s easy to interpret any criticism of the hacker ethic (which is what I’m about to offer) as a kind of assault.

When I talk about this, what I tell people is that I’m not worried about algorithms taking over humanity, because they kind of suck at a lot of things, right? And we’re really not that good at a lot of the things we do. But there are things that we’re good at. The example I like to give is Amazon’s recommender system. You all run into this on Netflix or Amazon, where they recommend stuff to you. And those algorithms are actually very similar to a lot of the sophisticated artificial intelligence we see now. It’s the same underneath.

I’m interested in what happens when artists who are used to being artists decide that the best place for a work is within a space that seems to require an entirely different method of construction. And of course, there’s no hard line between forms, and plenty of people exist both as highly proficient working artists and exceptionally skilled programmers. Tons of them, right? But I’m not talking so much about skill or even background. Instead, I’m interested in mentality.

We’ve been building autonomous vehicles for about twenty-five years, and now that the technology has been adopted much more broadly and is on the brink of being deployed, our earnest faculty who’ve been looking at it are now really interested in questions like this: a car suddenly recognizes an emergency; an animal has just jumped out in front of it. There’s going to be a crash one second from now. The human nervous system can’t react that fast. What should the car do?

I think the part that engages students from underrepresented ethnic groups is missing. They don’t see themselves reflected, and they don’t see their interests or their cultures reflected, so they stay outside of it even if it’s free, or even if it’s right in their neighborhood.