We should use our toolbox to make complexity understandable. We need to use the tools at our disposal to build data literacy by showing the context that data exists in. Because with that data, and with context around the data, we’ll be able to build understanding…


The premise of our project is really that we are surrounded by machines that are reading what we write, and judging us based on whatever they think we’re saying.

Positionality is the specific position or perspective that an individual takes given their past experiences and their knowledge; their worldview is shaped by positionality. It's a unique but partial view of the world. And when we're designing machines, we're embedding positionality into those machines through all of the choices we make about what counts and what doesn't count.

AI Blindspot is a discovery process for spotting unconscious biases and structural inequalities in AI systems.

I think the question I'm trying to formulate is: in this world of increasing optimization, where the algorithms will be accurate… They'll increasingly be accurate. But their application could lead to discrimination. How do we stop that?

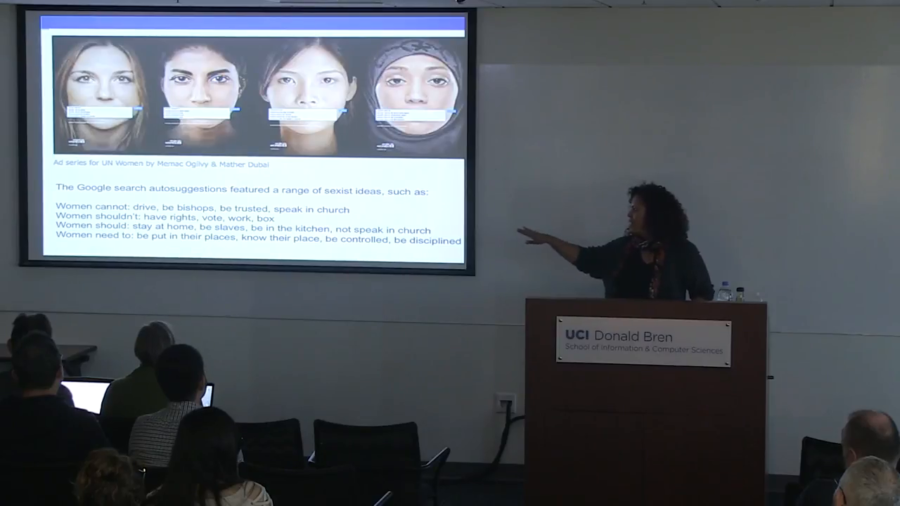

All they have to do is write to journalists and ask questions. What they do is ask a journalist something like, "What's going on with this thing?" And journalists, under pressure to find stories to report, go looking around. They immediately search for it on Google. And that becomes the tool of exploitation.

One of the things that I think is really important is that we pay attention to how we might recuperate and recover from these kinds of practices. So rather than thinking of this as just a temporary glitch, I'm going to show you several of these glitches, and maybe we'll see a pattern.

I've experienced firsthand the challenges of trying to correct misinformation, and in part my academic research builds on that experience and tries to understand why so much of what we did at Spinsanity antagonized even those people who were interested enough to go to a fact-checking website.

What is it about our brains that makes facts so challenging, so odd and threatening? Why do we sometimes double down on false beliefs? And maybe why do some of us do it more than others?