We as a society have to decide whether or not the ability to access and change our brains is something that we want, that we’re going to embrace, or something that we’re going to put limits on.

Archive

In the next ten years we will see data-driven technologies reconfigure systems in many different sectors, from autonomous vehicles and personalized learning to predictive policing and precision medicine. While the advances that we will see will create phenomenal new opportunities, they will also create new challenges, and new worries, and it behooves us to start grappling with these issues now so that we can build healthy sociotechnical systems.

When you make a decision to opt for an automated process, you’re already, to some extent, compromising transparency. Or you could say it the other way around. It’s possible to argue that if you opt for extremely strict transparency regulation, you’re making a compromise in terms of automation.

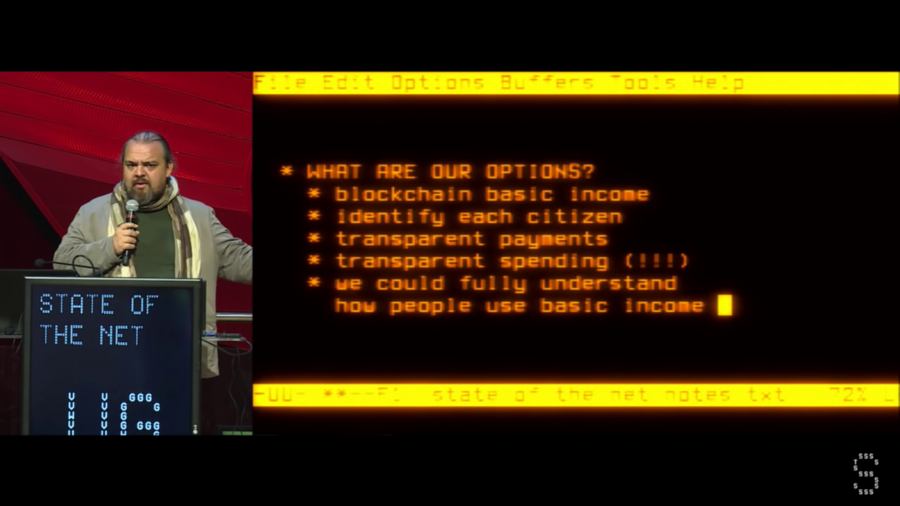

Everybody thinks of bureaucrats as being kind of a neutral force. But I’m going to make the case that bureaucrats are in fact a very strongly negative force, and that automating the bureaucratic functions inside of our society is necessary for further human progress.

In a world of conflicting values, it’s going to be difficult to develop values for AI that are not the lowest common denominator.

I think we are groping towards this idea of truth. And even the word truth can be defined in multiple different ways. So we are, by the very nature of the subject, dealing with a very slippery topic.

We want to sort of bring you all up to speed on some of the things that we’ve been thinking about, some of the conversations we’ve been having that I’ve had to edit out of the tail ends of episodes, link a few concepts and also be… Well, first because we think it’s really important to be sort of transparent about where we’re going with the series and the conversations we’re having.

This is why it matters whether algorithms can be agonist, given their roles in governance. When the logic of algorithms is understood as autocratic, we’re going to feel powerless and panicked because we can’t possibly intervene. If we assume that they’re deliberatively democratic, we’ll assume an Internet of equal agents, rational debate, and emerging consensus positions, which probably doesn’t sound like the Internet that many of us actually recognize.