So how did this start? Actually all of us—Solon, Sophie, and many other fellows and research, not just at PRG, the Information Law Institute, but also at MCC—we’ve been studying computation, automation, and control in different forms for quite a long time. But it was only at the end of last summer really that we realized that there’s this new notion of the algorithm gaining currency.

Archive (Page 1 of 2)

What I’m going to do today is situate digital methods as an approach, as an outlook, in the history of Internet-related research. I’d like to divide up the history of Internet research largely into three eras, the first being where we thought of the Web as a kind of cyberspace.

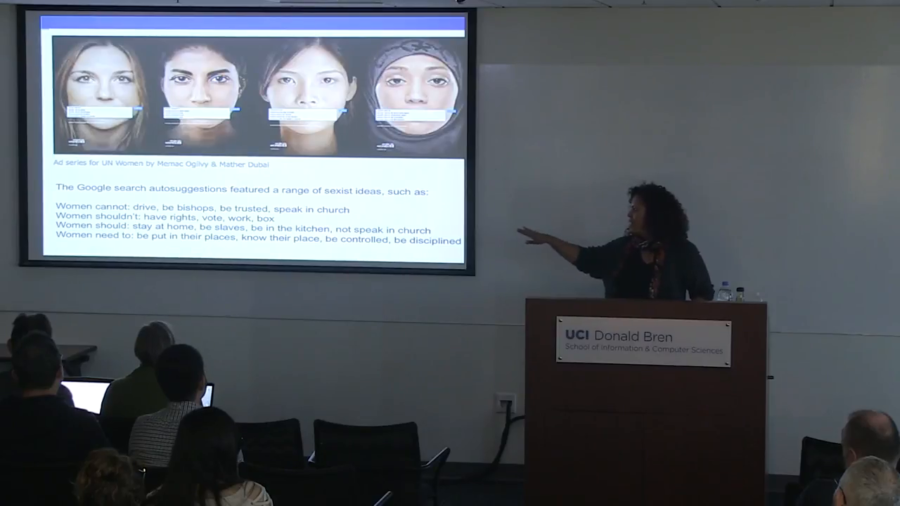

One of the things that I think is really important is that we’re paying attention to how we might be able to recuperate and recover from these kinds of practices. So rather than thinking of this as just a temporary kind of glitch, in fact I’m going to show you several of these glitches and maybe we might see a pattern.

We’ve already been through several situations where new technologies come along. The Industrial Revolution removed a large number of jobs that had been done by hand, replaced them with machines. But the machines had to be built, the machines had to be operated, the machines had to be maintained. And the same is true in this online environment.

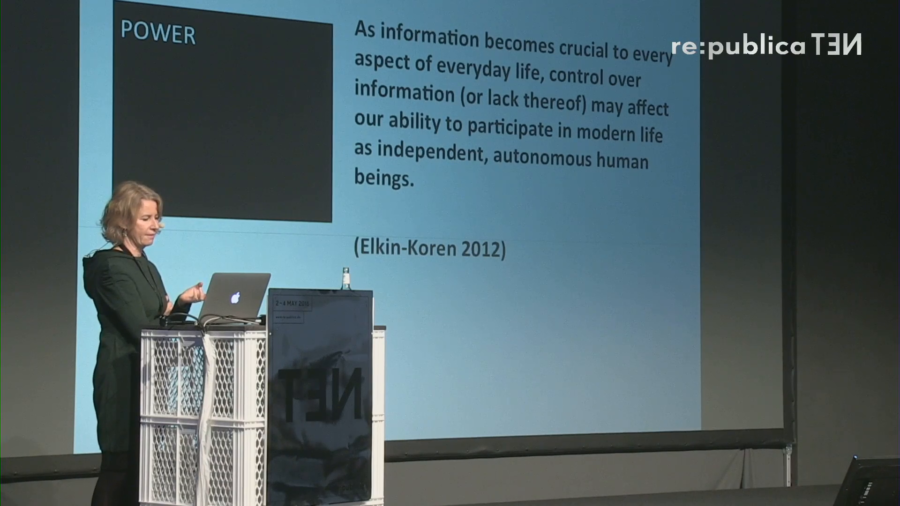

What does it mean for human rights protection that we have large corporate interests—the Googles, the Facebooks of our time—that control and govern a large part of the online infrastructure?

There’s already a kind of cognitive investment that we make, you know. At a certain point, you have years of your personal history living in somebody’s cloud. And that goes beyond merely being a memory bank, it’s also a cognitive bank in some way.

Google just has to grow. It has to keep growing. But Google grows at its own peril. Google grew so much that what happened? It outgrew Google. Google had to become what? Alphabet. Now what is Alphabet? Alphabet is not Google. Alphabet is a holding company. So Google’s new business as Alphabet is to do what? It’s to buy and sell technology companies. So, once a company becomes just too big to flip anymore, it becomes a flipper of other companies.