So how did this start? Actually all of us—Solon, Sophie, and many other fellows and researchers, not just at PRG, the Information Law Institute, but also at MCC—we’ve been studying computation, automation, and control in different forms for quite a long time. But it was only at the end of last summer really that we realized that there’s this new notion of the algorithm gaining currency.

Archive

In the next ten years we will see data-driven technologies reconfigure systems in many different sectors, from autonomous vehicles to personalized learning, predictive policing, to precision medicine. While the advances that we will see will create phenomenal new opportunities, they will also create new challenges—and new worries—and it behooves us to start grappling with these issues now so that we can build healthy sociotechnical systems.

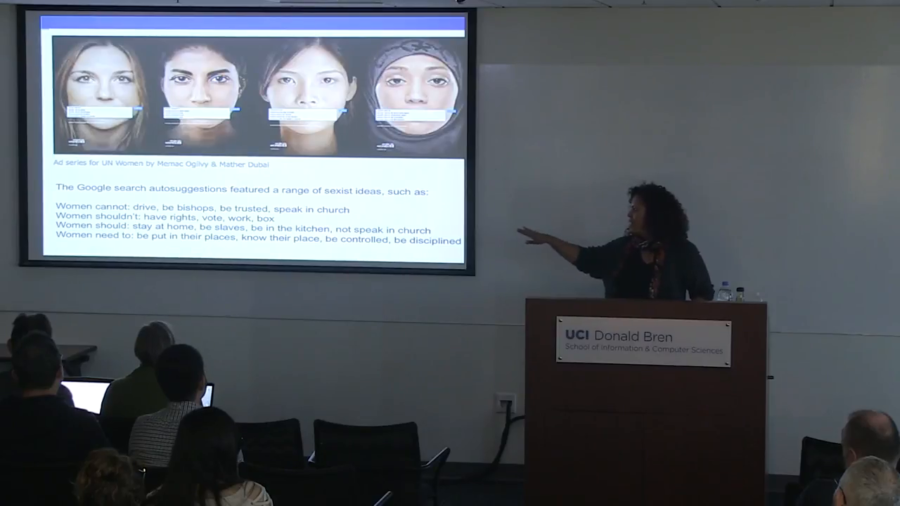

All they have to do is write to journalists and ask questions. And what they do is they ask a journalist a question and be like, “What’s going on with this thing?” And journalists, under pressure to find stories to report, go looking around. They immediately search something in Google. And that becomes the tool of exploitation.

One of the things that I think is really important is that we’re paying attention to how we might be able to recuperate and recover from these kinds of practices. So rather than thinking of this as just a temporary kind of glitch, in fact I’m going to show you several of these glitches and maybe we might see a pattern.

The question is what are we doing in the industry, or what is the machine learning research community doing, to combat instances of algorithmic bias? So I think there is a certain amount of good news, and it’s the good news that I wanted to focus on in my talk today.

Quite often when we’re asking these difficult questions we’re asking about questions where we might not even know how to ask where the line is. But in other cases, when researchers work to advance public knowledge, even on uncontroversial topics, we can still find ourselves forbidden from doing the research or disseminating the research.